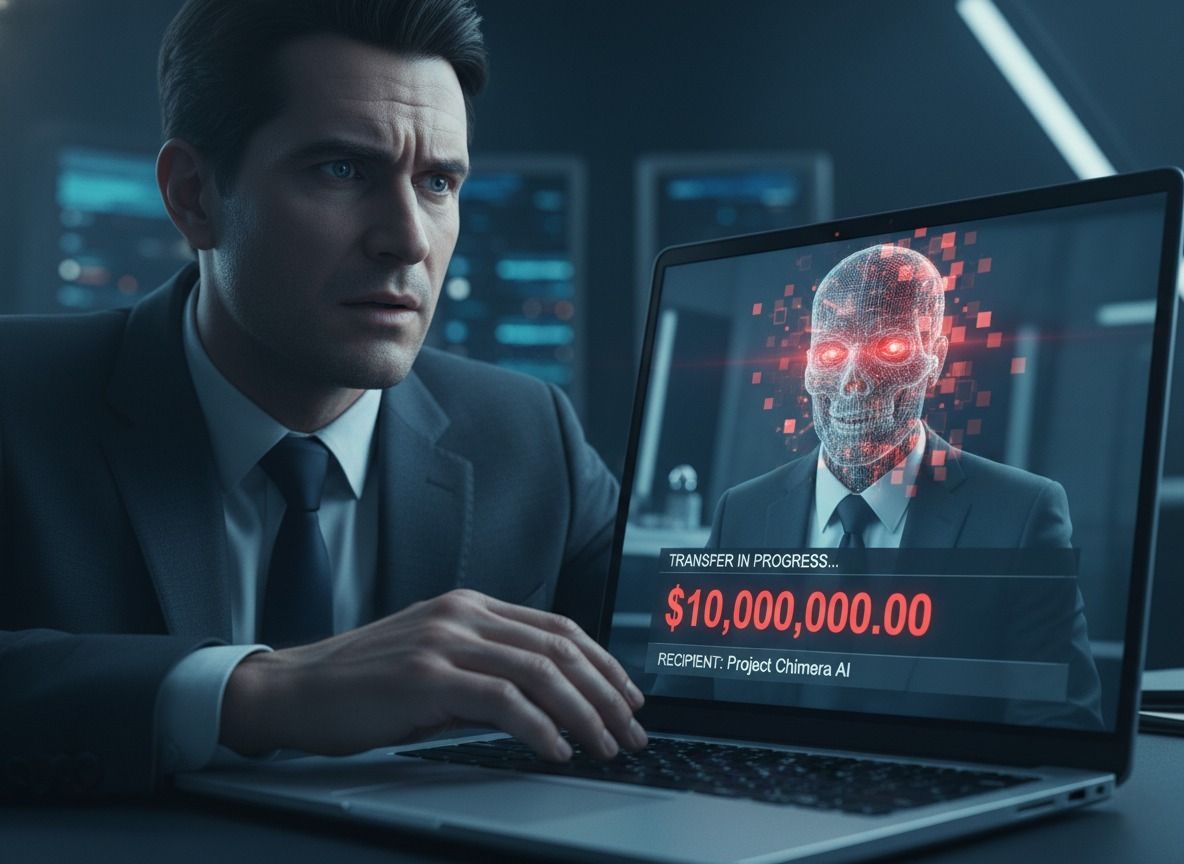

Picture a video meeting with your CEO. The face looks right. The voice sounds right. The request is for an urgent, confidential wire transfer. But none of it is real. This is deepfake phishing, and it is already costing companies millions.

This new AI threat is the social engineer's ultimate weapon. It exploits our natural trust in video and voice. Here is how it works, and how your IT defenses have to change.

The Techniques of a Digital Reflection

How does a scammer clone an executive? The method is frighteningly easy. It begins with data scraping. Videos posted on company websites or on LinkedIn are ideal training data for AI models. A few minutes of audio is all that is required.

Next comes the synthesis stage, in which sophisticated AI produces the fake content. The last stage is the psychological play. The scammers create a sense of pressure that shuts down rational thinking.

- A CISO's Nightmare: “The technology has become accessible and affordable. It is no longer about amateurish emails. We are battling carefully crafted illusions,” writes a cybersecurity researcher with BleepingComputer.

AI-powered Real-World Heists

Take the $35 million Hong Kong case. Deepfakes staged a video call with a finance worker. The virtual replicas were so convincing that the worker approved several transactions. It was not just an email hack. It was a multi-player digital performance.

In another case in the UK, an executive at an energy company transferred $243,000. The request came from a deepfake audio replica of his CEO's voice. The executive said the voice sounded exactly like his boss.

- The Economic Cost: According to FBI reports, BEC scams, a category that includes deepfake vishing, caused more than $2.9 billion in losses in 2023. That figure is expected to climb.

Why the AI Lie is in Your Head

This new kind of IT threat succeeds because it hacks human psychology, not merely software. We are wired to trust authority figures. A direct instruction from a superior triggers a compliance reflex. Urgency kills critical thinking.

The problem lies in the medium itself. For years we have been taught to be suspicious of unfamiliar emails. But a live video feed? Our minds are not conditioned to doubt it. The uncanny valley is closing. The fakes are now good enough to pass scrutiny.

Protecting Yourself: Building Your Human Firewall

So, what can you do? Technology alone will not save you. You need a cultural shift. Begin with strict verification measures. Any out-of-the-ordinary request involving finance or data should be verified through a separate channel that was established beforehand.

Think of it as a kind of secret handshake. After receiving a request, call back on a known number. Use a codeword. This out-of-band verification breaks the scammer's chain.
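The callback-and-codeword check can be written down as a simple rule. The following is a minimal sketch, not a production system: the `KNOWN_NUMBERS` directory, the `CODEWORDS` table, the `Request` fields, and the sample values are all hypothetical names made up for illustration.

```python
import hmac
from dataclasses import dataclass

# Hypothetical callback numbers, recorded before any request arrives.
KNOWN_NUMBERS = {"ceo": "+1-555-0100"}
# Hypothetical codewords agreed in person. A real system would not keep them in plain text.
CODEWORDS = {"ceo": "blue-heron"}


@dataclass
class Request:
    requester: str        # who the caller claims to be
    action: str           # e.g. "wire_transfer"
    callback_number: str  # number the employee actually dialed to confirm
    spoken_codeword: str  # codeword given during the callback


def verify_out_of_band(req: Request) -> bool:
    """Approve only if the callback used the number on file and the codeword matches."""
    known = KNOWN_NUMBERS.get(req.requester)
    expected = CODEWORDS.get(req.requester)
    if known is None or expected is None:
        return False  # no pre-established channel, so nothing can be verified
    if req.callback_number != known:
        return False  # the callback went to a number that is not on file
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(req.spoken_codeword.encode(), expected.encode())
```

The point of the design is that nothing supplied during the suspicious call itself, including a "new" phone number, counts as evidence. Only details recorded in advance do.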

- Actionable Insight: “Train your teams to see verification as a responsibility, not as disrespect. Encourage employees to question any request, regardless of its origin,” recommends a security lead on CSO Online.

The first and most effective defense is employee training. Regular, rigorous simulations are important. Drill the new verification procedures until they become muscle memory.

AI Arms Race: Detection vs. Deception

Can we use AI to fight AI? Absolutely. Deepfake detection tools are an emerging niche. These solutions examine minute digital fingerprints. They look for unusual eye-blinking patterns or anomalies in audio waveforms.

It is, however, a cat-and-mouse game. Each new detection method is answered by an improved generation of fakes. Relying on detection software alone is a flawed strategy. Leaders must integrate it into a larger, human-centric defense strategy.
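One way to picture that layering is a rule in which the detector can raise an alarm but can never approve a request on its own. This is a minimal sketch under stated assumptions: the `decide` function, the score scale from 0 to 1, and the 0.5 threshold are hypothetical and not taken from any real detection product.

```python
def decide(detector_fake_score: float, out_of_band_verified: bool,
           threshold: float = 0.5) -> str:
    """Combine a detector score with human verification.

    detector_fake_score: assumed scale where 0.0 means "looks genuine"
    and 1.0 means "looks synthetic".
    """
    if detector_fake_score >= threshold:
        return "escalate"  # the detector flagged the call, so involve security
    if not out_of_band_verified:
        return "hold"      # a clean score is not proof, so wait for the callback
    return "approve"       # detector is quiet and a human confirmed the request
```

A low score only removes one reason to stop. Approval still depends on the human check, so a fake that slips past the detector is not automatically trusted.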

Your Guide to Fighting Synthetic Fraud

The age of believing your eyes and ears is over. Deepfake phishing marks a paradigm shift in cyber threats. It combines the latest AI with classic psychological manipulation.

How you respond is a matter of life or death for your company. Update your security policies today. Require multi-factor verification for all sensitive actions. Above all, instill a culture in which questioning authority is a prerequisite of security.

Will you wait until you make a $35 million mistake? Or will you build the human firewall that stops the synthetic swindler at the gate? The choice is binary. The time to decide is now.